From Paper to Pipeline: How We Built a Gemini-Powered OCR Engine for Hong Kong's PASP Documents

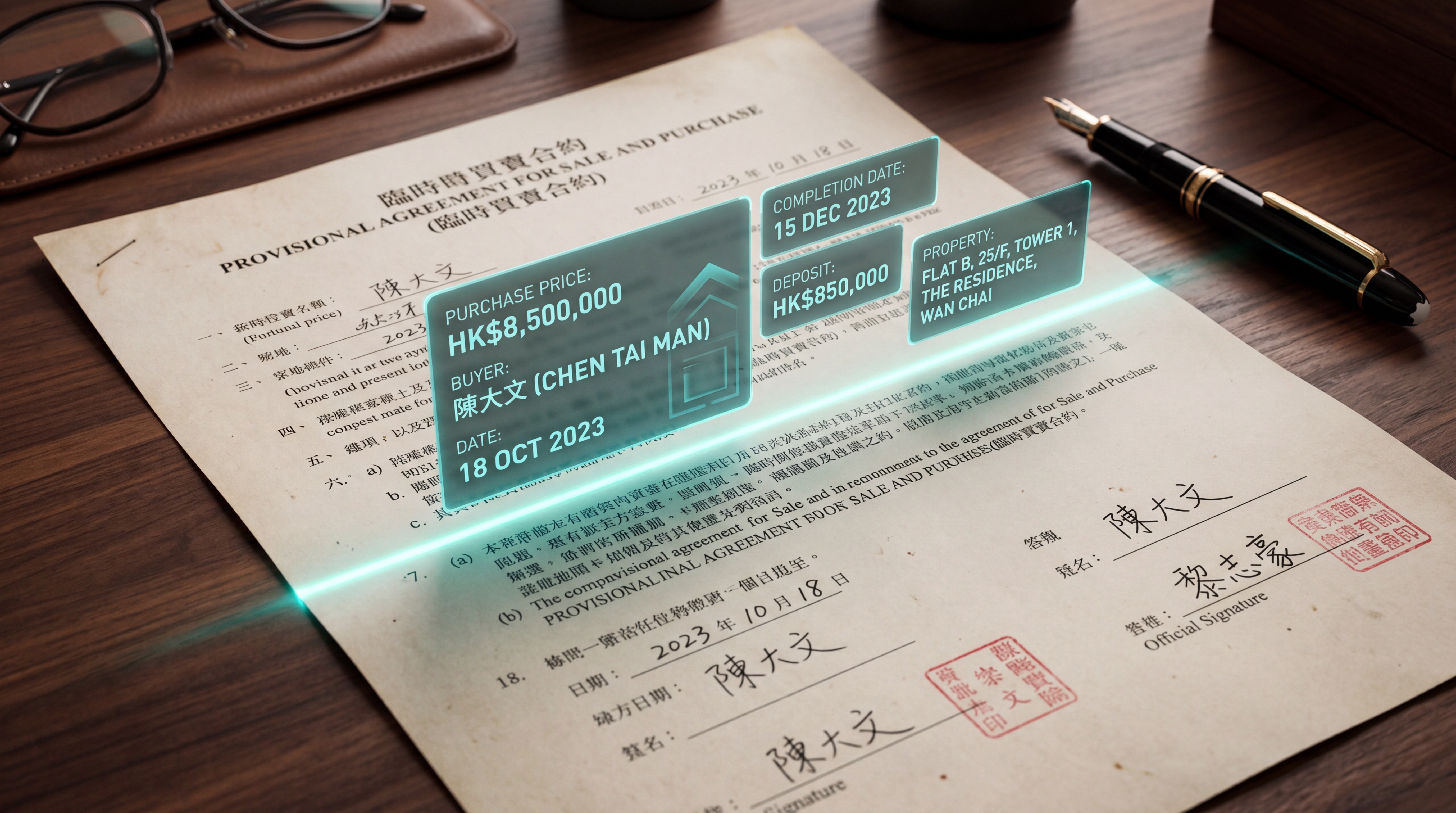

How MAGO AI built a production OCR engine powered by Gemini Vision to automate extraction of Hong Kong's Provisional Agreement for Sale and Purchase (PASP) documents — replacing manual data entry across agencies, law firms, banks, and brokers.

The HK$8 Trillion Paper Problem

Hong Kong's residential property market moves roughly HK$500–800 billion in transaction value every year. Every single one of those deals — from a HK$3M starter flat in Tseung Kwan O to a HK$300M peak-of-Peak mansion — passes through a document called the Provisional Agreement for Sale and Purchase (PASP, 臨時買賣合約).

The PASP is the legal moment a deal becomes real. It locks in price, parties, completion date, deposit terms, and a dozen other clauses that downstream parties — solicitors, banks, mortgage brokers, valuers, stamp duty offices — all need to ingest, verify, and act on within tight timelines.

And yet, in 2026, the dominant workflow at most agencies, banks, and law firms still looks like this: a paper PASP is signed at the agency, scanned (or photographed on a phone), emailed as a PDF to the bank, the lawyer, and the buyer's accountant, then manually re-keyed field by field by a junior staffer into a CRM, mortgage system, or case management tool — and finally cross-checked by someone else against the original.

Multiply that by ~60,000 transactions a year, and you have a multi-million-dollar invisible tax on the entire industry — paid in junior salaries, error-correction overhead, and deals that slip past their critical 14-day stamping deadline.

This is exactly the kind of problem that, until very recently, AI couldn't actually solve. Now it can. Here's how we built it.

Why "Just Use OCR" Doesn't Work for PASPs

When we first scoped this project, the obvious instinct was: "OCR is a 30-year-old solved problem, just throw Tesseract or AWS Textract at it." We tried. It failed. Here's why.

A real-world PASP is a document from hell, by machine-vision standards. It mixes Traditional Chinese, English, and numerals, often in the same field — buyer names like 陳大文 Chan Tai Man sit next to HKID numbers like A123456(7). The forms are pre-printed with handwritten fill-ins: the blank lines are printed, but the actual transaction data is written in pen, sometimes by an agent in a hurry on a clipboard.

Stamps, chops (印章), and signatures overlap with text fields. Tables of optional clauses get ticked ☑ or entire paragraphs crossed out ✗ by hand. Photocopy degradation, phone-camera skew, fluorescent glare, and the classic Hong Kong special — a scan of a scan of a fax — are everyday realities. And layouts vary: Centaline, Midland, Ricacorp, and the small independent agencies all use slightly different PASP templates. Even within one agency, the form changes year to year.

Traditional OCR pipelines — bounding-box detection, then character recognition, then template matching — collapse on roughly 40% of real-world inputs. The "easy" 60% gets extracted, but the operator still has to manually verify every field because they can't trust which 40% failed. Net productivity gain: near zero.

We needed something that could read the document the way a human conveyancing clerk reads it — understanding context, ignoring noise, and knowing the difference between a buyer's HKID and a property's lot number even when both are 8-digit strings two centimeters apart.

Why Gemini Vision Won the Bake-Off

We benchmarked the major vision-language models on a held-out set of 200 real PASPs (anonymized, with client consent). The evaluation criteria were brutally simple: per-field accuracy, end-to-end latency, and cost per document.

Gemini's vision capabilities won on the metric that actually mattered for this use case: structured extraction accuracy on noisy, mixed-language, semi-handwritten Asian-language documents. A few specific reasons stood out.

First, native multimodal grounding. We could pass the entire PASP image alongside a structured JSON schema and a Cantonese-aware system prompt, and get back a typed object — not a wall of text we'd have to post-process with regex. The model "sees" the document and "writes" the JSON in a single forward pass, which means its understanding of layout context (this number is next to "成交價" so it's the purchase price) is preserved end-to-end.

Second, genuinely good Traditional Chinese handwriting recognition. This was the killer. Most Western-trained OCR models treat Chinese handwriting as a long-tail edge case. Gemini handled cursive 行書-style handwritten names and addresses with accuracy we hadn't seen elsewhere — including disambiguating visually similar characters like 戊/戍/戎 in surnames.

Third, long-context tolerance. A PASP plus its standard schedule is often 8–15 pages. We can feed the entire document in one request and let the model reason about cross-page consistency (e.g., the buyer named on page 1 must match the signature block on page 12), instead of stitching fragments together ourselves.

Fourth, a generous output token budget. Returning the full structured object — sometimes 80+ fields including nested arrays for multiple buyers, sellers, and optional clauses — fits comfortably in a single response.

The combination meant we could replace what would have been a four-stage pipeline (detect → OCR → classify → structure) with a single inference call.

Architecture: Boring on Purpose

The system itself is deliberately unglamorous. Boring infrastructure is good infrastructure when the AI layer is doing the heavy lifting.

Ingestion. Documents arrive via three channels: a web upload portal for solicitors and bank operations teams, a WhatsApp Business API endpoint for property agents in the field, and a batch SFTP drop for enterprise clients pushing hundreds of historical PASPs through for digitization. All three normalize to PDF and land in a Cloud Storage bucket.

Pre-processing. A lightweight worker rasterizes PDFs to images, runs basic deskew and contrast normalization, and splits multi-page documents. We deliberately avoid aggressive pre-processing — modern vision models do better with the raw artifact than with an over-cleaned version that's lost subtle cues.

Extraction. The core call: each page (or page group) is sent to Gemini with a system prompt that defines the PASP schema, the bilingual field names, and a short list of edge cases ("if a clause is crossed out, set its status to 'struck'; do not extract its content"). Output is a strict JSON object validated against a Pydantic model.

Confidence scoring and human-in-the-loop. This is where most "AI OCR" startups quietly fall apart. We don't trust any single extraction blindly. For each field, we generate a confidence score using a combination of model self-reporting and a second-pass verification call where Gemini is asked to validate its own output against the source image. Fields below a tunable threshold (typically 0.92 for monetary amounts, 0.95 for HKID numbers) are routed to a lightweight human review UI. Reviewers see the original document region and the extracted value side-by-side, and can correct in one keystroke.

Output. Structured data flows out via webhook to whatever system the client uses — Salesforce, a custom CRM, a law firm's case management tool, or a bank's mortgage origination platform. We also generate a clean, machine-readable JSON archive and an audit log of every field-level decision for compliance purposes.

The whole stack runs on Cloud Run with Firestore for state and a Secret Manager-backed key rotation policy. End-to-end latency for a typical 10-page PASP is under 30 seconds. Cost per document is in the low cents.

What This Actually Unlocks for the Business

It's tempting to frame this as "we automated data entry," but that undersells it. The real story is what becomes possible once PASP data is structured, queryable, and flowing in real time.

For property agencies, every signed deal becomes an instant data asset. Pipeline dashboards update the moment the ink dries, not three days later when someone gets around to keying it in. Commission reconciliation, deal velocity analytics, and team performance reporting stop being month-end fire drills.

For law firms, a conveyancing solicitor can quote, conflict-check, and open a matter file in minutes instead of hours. Stamp duty calculations are pre-populated. Discrepancies between the PASP and the formal Sale and Purchase Agreement get flagged automatically.

For banks and mortgage brokers, the mortgage application process can begin the instant the PASP is signed, not after the buyer manually transcribes the deal terms into an application form. This compresses the funding timeline by days, which directly reduces the bank's pipeline risk and improves the borrower experience.

For valuers and surveyors, comparable transaction databases get populated with structured, verified data instead of scraped approximations.

The aggregate impact across the ecosystem is the kind of efficiency gain that compounds. A 14-day stamping window has a lot more slack when the first three days aren't burned on data entry.

What's Next

We're rolling out the system with a small group of design-partner law firms and mortgage brokers in Hong Kong through Q2 2026. The next phases on our roadmap focus on cross-document reconciliation — automatically matching the PASP against the formal SPA, Land Registry records, and bank valuation reports to surface discrepancies before they become disputes. We're also planning mainland China expansion: the same architecture, retrained on Simplified Chinese 商品房买卖合同 templates, opens a market roughly 50× the size of Hong Kong's. And once we have clean structured data, we can auto-draft generative downstream artifacts — the first version of stamping submissions, mortgage application forms, and conveyancing checklists.

The deeper bet is that vision-language models are about to do for unstructured legal and financial documents what spreadsheets did for accounting in the 1980s — turn an entire category of human grunt work into a software problem. PASPs are just our wedge into that market.

If you're a Hong Kong solicitor, banker, or property platform interested in piloting the system, get in touch.

MAGO AI builds production AI systems for the Hong Kong insurance, financial services, and legal sectors. Reach us at info@magoai.hk.

Ready to reimagineyour core business?

Book a one-on-one dedicated demo to see how MAGO AI can deploy intelligent support for your business in 48 hours, with 24/7 coverage.

Contact Our Team

Please share a few basics so we can get back to you faster and more accurately.